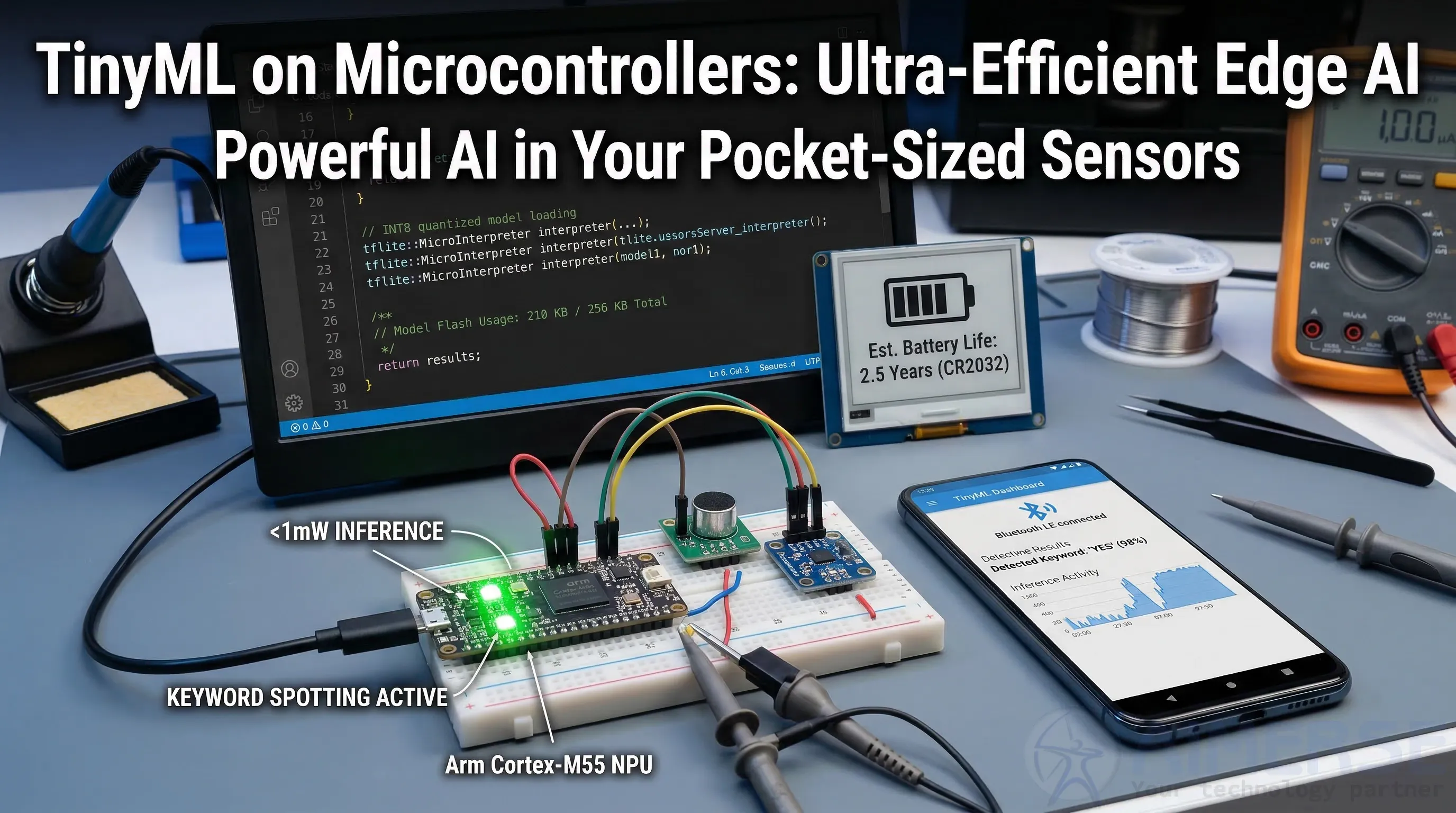

TinyML on Microcontrollers: Ultra-Efficient Edge AI.

TinyML deploys ML models on MCUs using <1MB RAM, <1mW inference for always-on edge intelligence.

TinyML compresses models via quantization (INT8), pruning (90% sparsity), and knowledge distillation to fit 256KB flash. TensorFlow Lite Micro/Edge Impulse run keyword spotting (95% accuracy), anomaly detection, image classification on Cortex-M55/RISC-V at 100μW/inference. 2026 sees Arm Ethos-U NPU in 70% MCUs; battery life extends years vs hours.

TinyML Techniques

- Quantization: FP32→INT8 cuts size 4x, speed 3x.

- Pruning: Remove 90% weights without accuracy loss.

- NAS: Auto-optimize models for MCU constraints.

- Federated: Distributed learning preserves privacy.

Node.js device management, Django analytics.

Applications

- Wearables: Gesture/activity recognition.

- Industrial: Vibration anomaly detection.

- Agriculture: Crop disease via smartphone camera.

- Smart Home: Wake-word detection.

10B devices by 2026; 1000x cloud cost savings.

Challenges

Memory limits (model surgery); drift (on-device retraining).

Deployment

- Edge Impulse data collection.

- Auto-optimize model.

- C++ deployment.

- OTA updates.

Conclusion

TinyML is revolutionizing the future of computing. By 2026, seamless integrations using React.js for device UIs, Node.js for device management, Django for ML model management, Laravel for rapid prototyping, and Java Spring Boot for scalable backends will make AI ubiquitous. Cognition is moving from the cloud to the physical world, and it is smooth and ubiquitous.